New AI Can Generate 3D Heads From a Single Picture

Published on Thu Sep 14 2023

The Two of Us | Enoch Leung on Flickr

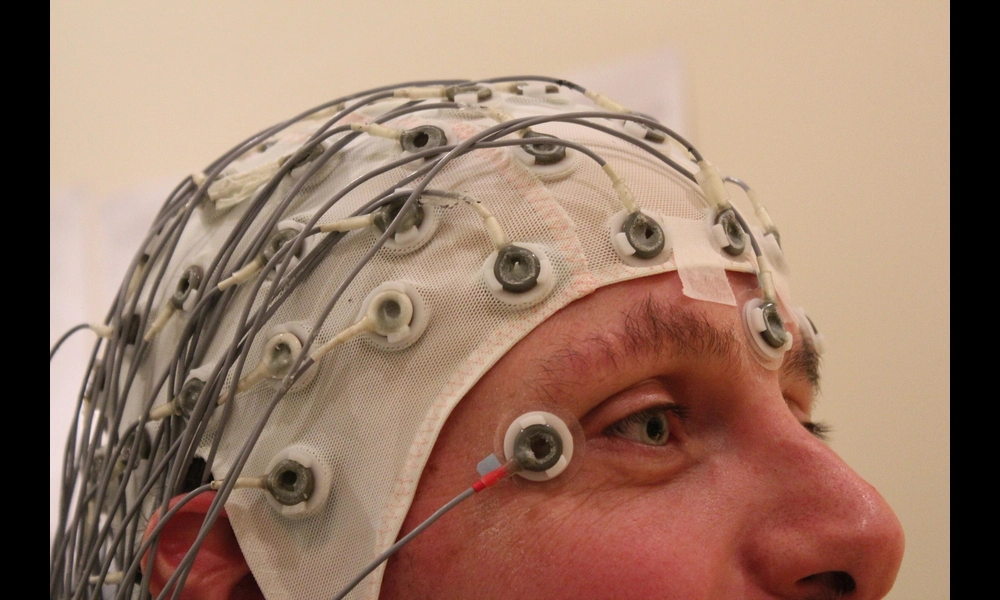

Researchers have developed a new method for reconstructing 3D models of human heads from just one or a few photographs. The method, called Multi-NeuS, represents heads with neural implicit functions: neural networks that encode shape and appearance, which lets the model learn prior knowledge about human heads directly from data. The model is trained on a dataset of smartphone videos, eliminating the need for scanned 3D data. After training, Multi-NeuS can fit novel heads from a few photographs (few-shot mode) or from a single one (one-shot mode).
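To make the idea of an implicit function concrete, here is a minimal sketch in Python with NumPy. It assumes a signed distance formulation, which is one common kind of implicit function; the article does not say which formulation Multi-NeuS uses. The function maps any 3D point to a number that is negative inside the shape, zero on its surface, and positive outside. The analytic sphere stands in for a trained network, and `TinyImplicitMLP` is a hypothetical, untrained network that only illustrates the interface (3D points in, one scalar per point out). None of this is the authors' code.

```python
import numpy as np


def sphere_sdf(points, radius=1.0):
    """Analytic signed distance to a sphere centred at the origin.

    Stand-in for a learned implicit function: negative inside,
    zero on the surface, positive outside.
    """
    return np.linalg.norm(points, axis=-1) - radius


class TinyImplicitMLP:
    """Hypothetical two-layer MLP mapping 3D points to one scalar each.

    The weights are random and untrained, so the outputs are not
    meaningful distances; the class only shows the interface.
    """

    def __init__(self, hidden=32, seed=0):
        rng = np.random.default_rng(seed)
        self.w1 = rng.normal(scale=0.5, size=(3, hidden))
        self.b1 = np.zeros(hidden)
        self.w2 = rng.normal(scale=0.5, size=(hidden, 1))
        self.b2 = np.zeros(1)

    def __call__(self, points):
        hidden = np.tanh(points @ self.w1 + self.b1)
        return (hidden @ self.w2 + self.b2)[..., 0]


# Three query points: the sphere's centre, a point on its surface, a point outside.
points = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [2.0, 0.0, 0.0]])
distances = sphere_sdf(points)
mlp_values = TinyImplicitMLP()(points)
```

In a learned model, training adjusts the network weights until the zero level set of the function matches the observed head; a mesh can then be extracted from that level set.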

The research has potential applications in filmmaking, augmented reality, virtual reality, extended reality, and gaming. Acquiring accurate 3D models automatically, rather than building them by hand, can save time and resources while enabling more realistic and immersive experiences. Multi-NeuS is also data-efficient: it learns a generic human head model from a hundred short smartphone videos and produces convincing textured 3D head meshes from a single photograph.

However, the researchers also acknowledge some limitations of their approach. The training dataset is relatively small and lacks diversity in hair styles, adornments, and skin types. The videos in the dataset capture only the front and sides of the head, which leads to poor reconstructions of the back of the head. Further improvements and simplifications to the underlying architecture are needed to address these limitations and to improve the model's ability to fit occluded regions accurately.

Overall, this research represents a significant step forward in the field of 3D head reconstruction. By leveraging neural implicit functions and training on a small dataset of smartphone videos, Multi-NeuS demonstrates the potential to create high-quality 3D portraits from just a few photographs. The findings of this study offer insights into how transfer learning can be applied to implicit shape and appearance modeling, paving the way for future research in this area.